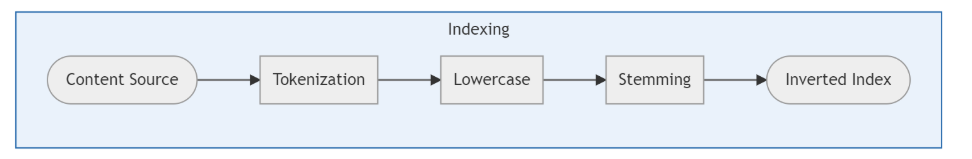

Crawling and Indexing Content Sources

This article provides an overview of how SearchUnify crawls and indexes content sources.

Overview

SearchUnify's sole job is to find information, but it does not go about it the way most people imagine.

Unlike CTRL+F, which matches a keyword against the text on a page, SearchUnify doesn't go through every document on a content source each time a user searches. Instead, it rummages through its own bare-bones copy of each document, called an index.

When you connect a Salesforce org, Khoros community, website, or another supported platform to your instance as a content source, SearchUnify finds all the documents stored on it, processes them, and creates a copy of them. The discovery job is crawling, the processing is indexing, and the resulting copy is an index.

Crawling is straightforward. It's indexing that demands engineering skill, innovation, and creativity. Consider these four crawled documents.

D1. The Martians write about cats.

D2. Natsume Soseki wrote a novel on cats.

D3. My cat cannot write.

D4. It's written in the book that cats are wild animals.

Storing them as they are would be memory intensive, so SearchUnify breaks the text in each document into short strings known as tokens.

Tokenization

A really primitive way to create tokens is to split the text at word boundaries. Although easy, this approach is seldom used because the resulting tokens aren't always what we humans would want.

"Natsume Soseki" in D2 is a writer, so it's incorrect to split his name into two tokens. The same goes for "wild animals" in D4.

D1. The | Martians | write | about | cats.

D2. Natsume | Soseki | wrote | a | novel | on | cats.

D3. My | cat | cannot | write.

D4. It's | written | in | the | book | that | cats | are | wild | animals.

And we haven't even considered languages such as Chinese, Japanese, or Thai, where words are not separated by spaces and only sentence boundaries exist.

D5. 北冥有魚其名為鯤。

A primitive tokenizer will find only one token in D5, when it has several. Consider its English translation, "In the Northern Lake lives a fish whose name happens to be Kun."
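The primitive approach can be sketched in a few lines of Python. This is only an illustration of splitting on whitespace, not SearchUnify's tokenizer, and the function name `naive_tokenize` is hypothetical.

```python
def naive_tokenize(text: str) -> list[str]:
    """Split on whitespace and strip punctuation from the edges of each chunk."""
    chunks = [chunk.strip(".,;:!?\"。") for chunk in text.split()]
    return [chunk for chunk in chunks if chunk]


# D2: the writer's name is split into two unrelated tokens.
print(naive_tokenize("Natsume Soseki wrote a novel on cats."))
# ['Natsume', 'Soseki', 'wrote', 'a', 'novel', 'on', 'cats']

# D5: with no spaces to split on, the whole sentence becomes one token.
print(naive_tokenize("北冥有魚其名為鯤。"))
# ['北冥有魚其名為鯤']
```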

SearchUnify's tokenizer is smarter. It can detect collocations and tokenize accordingly. Although the exact details are a trade secret, an approximate tokenization of the first four documents might resemble this.

D1. The Martians | write | about cats.

D2. Natsume Soseki | wrote | a novel | on cats.

D3. My cat | cannot | write.

D4. It's | written | in | the book | that | cats | are | wild animals.
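One simple way to keep collocations together is a greedy longest match against a list of known phrases. The sketch below assumes a hand-written phrase list (`KNOWN_PHRASES`, hypothetical); it is not SearchUnify's method, and it will not reproduce the approximate tokenization above exactly, since it only merges the phrases it has been told about.

```python
# Hypothetical phrase list; a production system would likely learn
# collocations from data rather than hard-code them.
KNOWN_PHRASES = {("natsume", "soseki"), ("wild", "animals")}
MAX_PHRASE_LEN = max(len(phrase) for phrase in KNOWN_PHRASES)


def phrase_tokenize(text: str) -> list[str]:
    """Tokenize on whitespace, but keep known multi-word phrases as one token."""
    words = [w.strip(".,;:!?\"") for w in text.split()]
    words = [w for w in words if w]
    tokens = []
    i = 0
    while i < len(words):
        # Try the longest candidate first; a single word always matches.
        for size in range(min(MAX_PHRASE_LEN, len(words) - i), 0, -1):
            candidate = tuple(w.lower() for w in words[i:i + size])
            if size == 1 or candidate in KNOWN_PHRASES:
                tokens.append(" ".join(words[i:i + size]))
                i += size
                break
    return tokens


print(phrase_tokenize("Natsume Soseki wrote a novel on cats."))
# ['Natsume Soseki', 'wrote', 'a', 'novel', 'on', 'cats']
```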

Conversion to Lowercase and Stopwords Removal

Gustave Eiffel * born * Dijon, France * 1832 * became * architect * constructed * Eiffel Tower.

Gustave Eiffel was born in Dijon, France in 1832 and became an architect who constructed the Eiffel Tower.

Those asterisks mar the sentence's beauty, but they don't really hinder comprehension. They are placeholders for stopwords. During indexing, SearchUnify removes all stopwords and punctuation and converts the tokens to lowercase.

Our index might look like this.

D1. martians | write | cats

D2. natsume soseki | wrote | novel | cats

D3. cat | cannot | write

D4. written | book | cats | wild animals

This technique has a few drawbacks. It mishandles entire phrases made of stopwords, such as Shakespeare's "To be or not to be," and it erases the difference between "us" and "the U.S." Nonetheless, clever programmers can find ways to overcome these shortcomings.
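As a rough sketch, lowercasing and stopword removal could look like the following. The stopword list is a hypothetical one, chosen only to cover the four sample documents, and `normalize` is an illustrative name rather than anything from SearchUnify.

```python
# Hypothetical stopword list covering only the sample documents.
STOPWORDS = {"the", "about", "a", "on", "my", "it's", "in", "that", "are"}


def normalize(tokens: list[str]) -> list[str]:
    """Lowercase each token, strip punctuation, and drop stopwords."""
    result = []
    for token in tokens:
        words = [w.strip(".,;:!?\"").lower() for w in token.split()]
        kept = [w for w in words if w and w not in STOPWORDS]
        if kept:  # a token made only of stopwords disappears entirely
            result.append(" ".join(kept))
    return result


print(normalize(["The Martians", "write", "about cats."]))
# ['martians', 'write', 'cats']
```

Note that `normalize(["To", "be", "or", "not", "to", "be"])` would return an empty list if all of those words were on the stopword list, which is exactly the drawback described above.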

Stemming

Are "sing" and "sang" two words or one? This isn't the musing of a ruminating philosopher on a far-off island, but something lexicographers and search engine designers have to grapple with. Their solution is to group words into lexemes. For example, "read", "reader", and "reading" can all be put under the lexeme READ.

D1. martian | write | cat

D2. natsume soseki | write | novel | cat

D3. cat | cannot | write

D4. write | book | cat | wild animal

The accuracy of stemming depends on language detection. Stemming is relatively easy in English and Chinese, but in languages with rich morphology, such as German and Russian, stemmers can be extremely sophisticated pieces of engineering.

In English, COMPUTER can be computer or computers. In Russian, KOMPYUTER has 12 case and number forms, some of which coincide: kompyuter, kompyutera, kompyuteru, kompyuter, kompyuterom, o kompyutere, kompyutery, kompyuterov, kompyutery, kompyuteram, kompyuterami, o kompyuterah. Each noun in Russian is inflected in 12 ways, instead of 2 in English.
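Grouping word forms under one lexeme can be illustrated with a plain dictionary lookup. Strictly speaking this is closer to lemmatization than to suffix-stripping stemming, and the `LEMMAS` table below is a hypothetical one that covers only the forms mentioned in this article.

```python
# Hypothetical lookup table: inflected form -> lexeme.
LEMMAS = {
    "martians": "martian",
    "cats": "cat",
    "wrote": "write",
    "written": "write",
    "animals": "animal",
    # A few of the Russian forms listed above map to one lexeme as well.
    "kompyutera": "kompyuter",
    "kompyuterom": "kompyuter",
    "kompyuterami": "kompyuter",
}


def lemmatize(tokens: list[str]) -> list[str]:
    """Replace every word in every token with its lexeme, if one is known."""
    return [
        " ".join(LEMMAS.get(word, word) for word in token.split())
        for token in tokens
    ]


print(lemmatize(["written", "book", "cats", "wild animals"]))
# ['write', 'book', 'cat', 'wild animal']
```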

Inverted Index

Once stemming is complete, the index is inverted: instead of listing keywords for each document, SearchUnify lists documents for each keyword. Here's what an inverted index looks like.

| Keyword | Occurrences | Documents |
| --- | --- | --- |
| martian | 1 | D1 |
| write | 4 | D1, D2, D3, D4 |
| cat | 4 | D1, D2, D3, D4 |
| natsume soseki | 1 | D2 |
| novel | 1 | D2 |
| cannot | 1 | D3 |
| book | 1 | D4 |
| wild animal | 1 | D4 |
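The inversion itself is a small transformation. The sketch below builds the same mapping from the four stemmed documents; the names `STEMMED_DOCS` and `build_inverted_index` are illustrative, and a real index would store far more than document IDs.

```python
from collections import defaultdict

# The four sample documents after tokenization, stopword removal, and stemming.
STEMMED_DOCS = {
    "D1": ["martian", "write", "cat"],
    "D2": ["natsume soseki", "write", "novel", "cat"],
    "D3": ["cat", "cannot", "write"],
    "D4": ["write", "book", "cat", "wild animal"],
}


def build_inverted_index(docs: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each keyword to the list of documents that contain it."""
    index = defaultdict(list)
    for doc_id, tokens in docs.items():
        for token in tokens:
            if doc_id not in index[token]:  # record each document once
                index[token].append(doc_id)
    return dict(index)


index = build_inverted_index(STEMMED_DOCS)
for keyword, doc_ids in index.items():
    print(f"{keyword} | {len(doc_ids)} | {', '.join(doc_ids)}")
```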

When a user searches, SearchUnify finds documents in this index, not in the content source. A search for "novel" will return D2, and a search for "wild animals" will return D4.

At this point, you might be wondering what happens if someone searches for "cat", which is in all four documents. In that scenario, other factors come into play, such as the keyword's position, its field (title, tag, or somewhere else), user permissions, and others that will be covered in the next section. For now, it's safe to assume that D3 will come up on top because "cat" is the first word in the document.
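To make the position factor concrete, here is a toy ranking that orders matching documents by how early the keyword appears. It is a deliberately simplified assumption, not SearchUnify's ranking, which weighs several other factors; the `search` function and the `DOCS` mapping are hypothetical, and the query is expected to be an already normalized keyword.

```python
# The four sample documents after tokenization, stopword removal, and stemming.
DOCS = {
    "D1": ["martian", "write", "cat"],
    "D2": ["natsume soseki", "write", "novel", "cat"],
    "D3": ["cat", "cannot", "write"],
    "D4": ["write", "book", "cat", "wild animal"],
}


def search(keyword: str, docs: dict[str, list[str]]) -> list[str]:
    """Return matching document IDs, earliest keyword position first."""
    hits = [
        (tokens.index(keyword), doc_id)
        for doc_id, tokens in docs.items()
        if keyword in tokens
    ]
    # Sort by position; ties are broken by document ID.
    return [doc_id for _, doc_id in sorted(hits)]


print(search("cat", DOCS))    # ['D3', 'D1', 'D4', 'D2']
print(search("novel", DOCS))  # ['D2']
```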
